Breaking It Down: Triggering the Workflow on Pull Requests

The on: pull_request: section tells GitHub to run this job any time a PR that targets the main branch is opened or updated. This is the entry point to our automated quality control.
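As a minimal sketch, the trigger described above could look like the fragment below. The workflow name is illustrative and not taken from the original workflow file.

```yaml
# Top of a workflow file such as .github/workflows/dbt_ci.yml (file name is an assumption)
name: dbt CI   # illustrative name

on:
  pull_request:
    branches:
      - main   # only PRs whose target (base) branch is main
```

With no explicit types list, the pull_request event fires when a PR is opened, reopened, or receives new commits, which matches the "opened or updated" behaviour described here.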

Step 1: Installing Dependencies (dbt deps)

After checking out the code and setting up Python, the first command we run is dbt deps. This installs the packages your dbt project declares in packages.yml, so the environment is ready for the subsequent steps.
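The setup steps and dbt deps might be wired together roughly as follows. The job id, Python version, and the dbt-postgres adapter are assumptions made for illustration; swap in the adapter for your own warehouse.

```yaml
jobs:
  dbt-ci:                      # hypothetical job id
    runs-on: ubuntu-latest
    steps:
      - name: Check out the PR code
        uses: actions/checkout@v4

      - name: Set up Python
        uses: actions/setup-python@v5
        with:
          python-version: "3.11"   # assumed version

      - name: Install dbt and SQLFluff
        # dbt-postgres is an assumed adapter; use the one matching your warehouse
        run: pip install dbt-core dbt-postgres sqlfluff

      - name: Install dbt packages
        run: dbt deps            # pulls the packages listed in packages.yml
```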

Step 2: Linting Your SQL with SQLFluff for Quality Control

Code quality and consistency are critical for a scalable data project. We use SQLFluff to lint all our SQL files automatically. This catches style inconsistencies, bad practices, and potential errors before they reach the main branch. If the linter reports violations, the step exits with a non-zero status and the entire CI check fails, prompting the developer to fix the code before the PR is merged.
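A lint step along these lines would fail the check whenever SQLFluff reports violations. The models path and the postgres dialect are assumptions; if the repository has a .sqlfluff config file that sets the dialect, the --dialect flag can be dropped.

```yaml
# Fragment: one entry in the job's steps list
- name: Lint SQL with SQLFluff
  # "models" is dbt's default model directory; the dialect here is an assumption
  run: sqlfluff lint models --dialect postgres
```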

Step 3: Running dbt build --select state:modified+ (Slim CI)

This is the heart of our CI pipeline and a dbt best practice known as “Slim CI”. Building and testing your entire dbt project on every single PR can be slow and expensive. Instead, we do something smarter.

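The command dbt build --select state:modified+ tells dbt to run and test only the models you’ve actually changed in your branch (state:modified), plus everything that depends on them downstream (+). To decide what counts as modified, dbt compares your branch against the artifacts of an earlier run, typically the production manifest.json, supplied through the --state flag. Building only this subset dramatically speeds up your pipeline. For more information, refer to the official dbt documentation.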
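A Slim CI step could be sketched as below. The ./prod-artifacts directory is hypothetical: it stands for wherever a manifest.json from the last production run has been downloaded, and how it gets there (artifact storage, a previous workflow, dbt Cloud) is outside the scope of this sketch. Warehouse credentials are likewise assumed to be provided through your profiles.yml and repository secrets.

```yaml
# Fragment: one entry in the job's steps list
- name: Build and test modified models (Slim CI)
  # ./prod-artifacts is a hypothetical folder holding the production manifest.json
  run: dbt build --select state:modified+ --state ./prod-artifacts
```

Adding the --defer flag alongside --state is a common companion choice, since it lets unmodified upstream models resolve to their production versions instead of being rebuilt in the CI schema.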

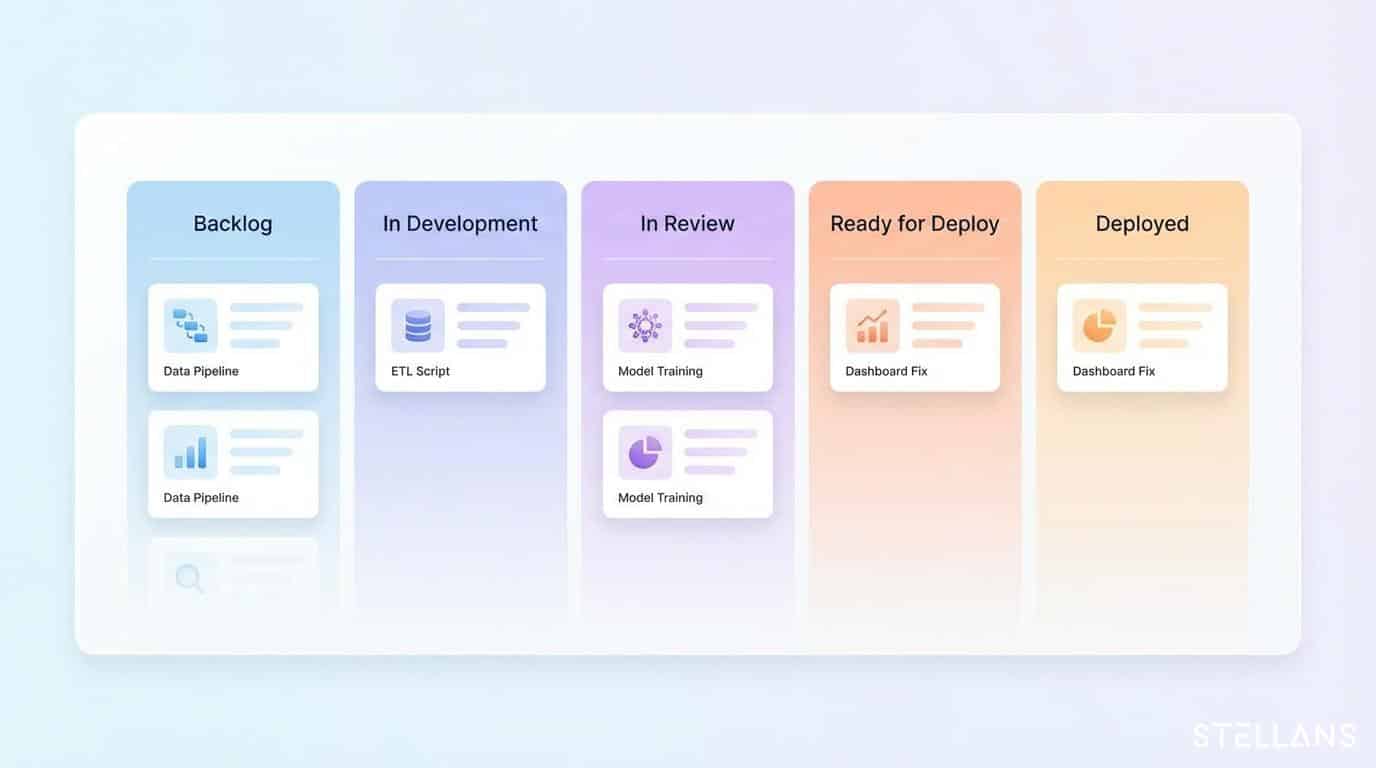

Step 4: Posting Automated Comments back to the PR

To close the feedback loop, the final step uses an action to post the results of the dbt build command directly as a comment on the pull request. This means your data analysts don’t have to dig through logs to see if their changes worked. The results are right there in the PR, making the review process transparent and efficient.
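The original workflow does not name the action it uses, so the fragment below is only one possible approach, built on actions/github-script. It assumes an earlier step saved the dbt output to a file called dbt_build.log (a hypothetical name, for example by piping the build command through tee), and that the job's token has pull-requests: write permission.

```yaml
# Fragment: one entry in the job's steps list
- name: Comment dbt results on the PR
  if: always()                 # still comment when an earlier step failed
  uses: actions/github-script@v7
  with:
    script: |
      const fs = require('fs');
      // dbt_build.log is a hypothetical file written by an earlier step
      const log = fs.readFileSync('dbt_build.log', 'utf8');
      const fence = '`'.repeat(3);   // code fence for the comment body
      await github.rest.issues.createComment({
        owner: context.repo.owner,
        repo: context.repo.repo,
        issue_number: context.issue.number,
        // keep only the tail of the log so the comment stays readable
        body: 'dbt build results:\n\n' + fence + '\n' + log.slice(-6000) + '\n' + fence,
      });
```

Note that pull requests opened from forks usually run with a read-only token, so a commenting step like this one may need a different setup for external contributors.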