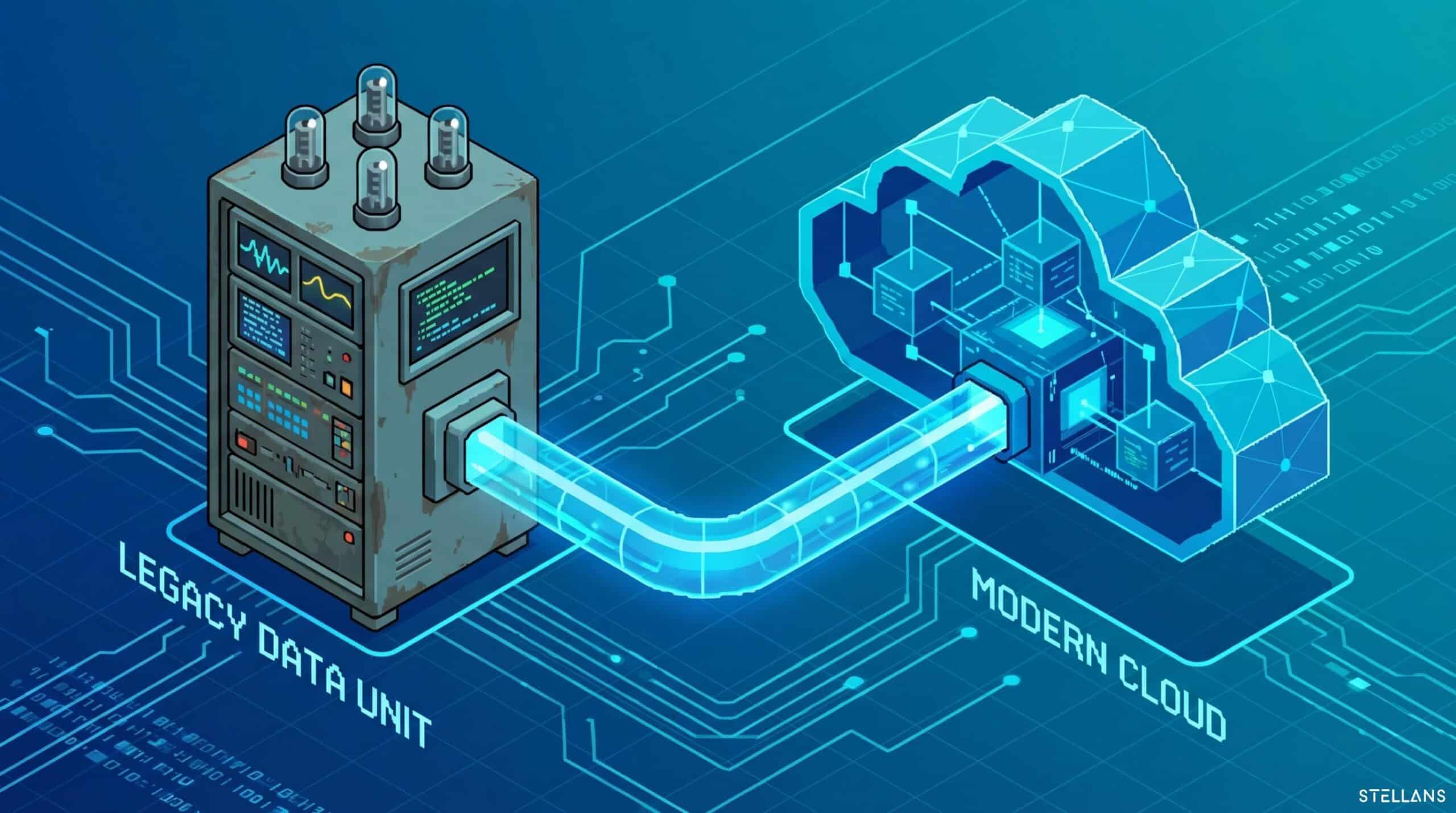

Transforming source data to match the structure required by modern cloud platforms unlocks new analytics capabilities. Integration work is necessary because a ten-year-old on-premise system stores customer data differently than a modern SaaS application. Bridging this structural gap is a central task of data engineering. We view a data pipeline as a highway: it relies on carefully managed toll-booth validations to keep data traffic flowing safely and reliably.

Strategies for Mapping Schema Differences

An established older system might use deeply nested data sets or highly denormalized wide tables. A new cloud ERP typically enforces strict API payloads and tightly normalized schemas. Mapping schema differences between these two software generations requires rigorous translation logic.

Our team addresses this challenge with a layered architecture. We land all raw source data, unchanged, in a standardized staging layer inside a cloud data warehouse. During this initial phase we focus entirely on extraction speed and defer all data formatting to the staging area. Once the raw data is in the warehouse, we use dbt (data build tool) to reshape the structures. We write modular SQL models that join legacy tables, parse nested JSON arrays, and rename columns to match the target ERP requirements. Because the transformations live in code, all logic is version-controlled and auditable.
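The reshaping step can be sketched in a few lines. The article describes doing this in dbt SQL models; the example below uses plain Python only to illustrate the logic, and every column name in it (`CUST_NO`, `CUST_NM`, `ORDERS_JSON`, and the target field names) is a hypothetical stand-in rather than a real schema.

```python
import json

# Hypothetical mapping from legacy column names to target ERP field names.
COLUMN_MAP = {
    "CUST_NO": "customer_id",
    "CUST_NM": "customer_name",
}


def flatten_orders(raw_row: dict) -> list[dict]:
    """Turn one wide legacy row with a nested JSON array into flat, renamed rows."""
    # Rename the top-level columns according to the mapping.
    base = {new: raw_row[old] for old, new in COLUMN_MAP.items()}
    # Parse the nested array; treat a missing or empty value as "no orders".
    orders = json.loads(raw_row.get("ORDERS_JSON") or "[]")
    # Emit one normalized row per nested order.
    return [
        {**base, "order_id": order["id"], "order_total": order["total"]}
        for order in orders
    ]


# A made-up staged row, as it might look after raw extraction.
legacy_row = {
    "CUST_NO": "C-1001",
    "CUST_NM": "Acme Ltd",
    "ORDERS_JSON": '[{"id": "SO-1", "total": "19.99"}, {"id": "SO-2", "total": "5.00"}]',
}

rows = flatten_orders(legacy_row)
for row in rows:
    print(row)
```

Keeping the column mapping in one dictionary mirrors the benefit described above: a renamed field is a one-line, reviewable change.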

Handling Data Type Mismatches Programmatically

Matching data formats between source and target keeps pipelines running reliably. Legacy systems frequently store dates as plain text strings. Older financial tables might log monetary values as floating-point numbers rather than exact numeric fields. When we restructure this data before it reaches a modern API, the target cloud platform can process the payload without type errors.

We resolve data type mismatches programmatically in our transformation layers. Our dbt models cast every column into its final format before the loading phase begins. We convert legacy date strings into standard UTC timestamps. We enforce fixed decimal precision on currency fields so that calculations are exact. We apply custom rules to text strings that must fit the character limits of the new platform. By handling these formatting details at the warehouse level, we greatly reduce the risk of failures during the final data load.
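As a rough illustration of these three casts, here is a minimal Python sketch rather than the dbt SQL the article refers to. The legacy date format, the fixed source UTC offset, the two-decimal currency scale, and the 20-character limit are all assumptions chosen for the example; truncation is only one possible rule for over-long text.

```python
from datetime import datetime, timedelta, timezone
from decimal import Decimal, ROUND_HALF_UP

# Assumptions for illustration only; real values depend on the source system.
LEGACY_DATE_FORMAT = "%m/%d/%Y %H:%M"      # e.g. "03/15/2014 09:30"
SOURCE_TZ = timezone(timedelta(hours=-5))  # legacy server assumed to run at UTC-5
NAME_MAX_LEN = 20                          # hypothetical target field limit


def to_utc(text: str) -> datetime:
    """Parse a legacy date string and convert it to a UTC timestamp."""
    local = datetime.strptime(text, LEGACY_DATE_FORMAT).replace(tzinfo=SOURCE_TZ)
    return local.astimezone(timezone.utc)


def to_money(value: float) -> Decimal:
    """Convert a float amount to a Decimal with exactly two decimal places."""
    # Going through str() uses the float's short repr instead of its full
    # binary expansion, then quantize() fixes the scale.
    return Decimal(str(value)).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def fit_text(text: str, max_len: int = NAME_MAX_LEN) -> str:
    """Trim surrounding whitespace and truncate to the target character limit."""
    return text.strip()[:max_len]


print(to_utc("03/15/2014 09:30").isoformat())
print(to_money(0.1 + 0.2))
print(fit_text("  Acme International Holdings Ltd  "))
```

Whether over-long values should be truncated, rejected, or routed to a review queue is a business decision; the function above only shows where such a rule would live.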