We can categorize the vast majority of business predictive models into a few core families based on the type of question you are trying to answer.

1. Regression Analysis (Predicting “How Much”)

Regression techniques serve as the workhorses of the analytics world. They are used when the outcome you want to predict is a continuous number, such as price, temperature, sales volume, or time.

Linear Regression

Linear regression is the simplest and most widely used form of predictive modeling. Imagine a scatter plot of data points; linear regression draws the straight line that best fits those points.

- How it works: It establishes a relationship between a dependent variable (what you want to know, like Sales) and one or more independent variables (input factors, like Ad Spend or Seasonality).

- Best Business Use Case: Forecasting sales revenue based on marketing budget; estimating the impact of a price change on demand.

- Pros: Highly interpretable. You can easily explain to a stakeholder: “For every $1,000 increase in ad spend, sales increase by $5,000.”

- Cons: It assumes a straight-line relationship between the inputs and the outcome. Curved (non-linear) patterns require transformed inputs or more advanced models.
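To make the "best-fit line" idea concrete, here is a minimal sketch in plain Python. The ad spend and sales figures are invented for illustration, and `fit_line` is a hypothetical helper rather than a library function; in practice a statistics package would handle this step.

```python
# Hypothetical monthly figures, in thousands of dollars (illustrative only).
ad_spend = [10, 20, 30, 40, 50]
sales = [65, 110, 160, 215, 260]

def fit_line(xs, ys):
    """Ordinary least squares with one input: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance of x and y divided by the variance of x.
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept

slope, intercept = fit_line(ad_spend, sales)

# Forecast sales for a planned ad spend of 60 (thousand dollars).
forecast = intercept + slope * 60
print(f"slope={slope:.2f}, intercept={intercept:.2f}, forecast={forecast:.1f}")
```

With these made-up numbers the slope comes out a little under 5, which is the kind of figure behind a statement like "every extra $1,000 in ad spend adds roughly $5,000 in sales."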

Logistic Regression

Despite its name, logistic regression is a classification method; it does not predict a continuous number. Instead, it predicts the probability of an event happening.

- How it works: Instead of a straight line, it fits an “S” shaped curve to the data, outputting a value between 0 and 1. This is typically converted into a binary outcome (Yes/No) by applying a cutoff such as 0.5.

- Best Business Use Case: Predicting Customer Churn (Will they leave? Yes/No); Lead Scoring (Is this lead likely to convert?); Credit Default (Will they pay back the loan?).

- Pros: It provides a probability percentage, which is often more useful than a simple Yes/No. For example, knowing a customer has a 92% risk of leaving triggers a more urgent response than a 51% risk.
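The S-curve and the Yes/No cutoff can be sketched in a few lines of plain Python. Everything here is an assumption made for illustration: the churn data is invented, and `fit_logistic`, `churn_probability`, and `will_churn` are hypothetical helpers that use simple gradient descent rather than the optimizers a real library would use.

```python
import math

def sigmoid(z):
    """The S-shaped curve: squashes any number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical data: months since last purchase, and whether the customer churned.
months_inactive = [0, 1, 2, 3, 4, 5, 6, 7]
churned = [0, 0, 0, 1, 0, 1, 1, 1]

def fit_logistic(xs, ys, lr=0.1, steps=5000):
    """Fit weight w and bias b by gradient descent on the log loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, ys)]
        grad_w = sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

w, b = fit_logistic(months_inactive, churned)

def churn_probability(months):
    return sigmoid(w * months + b)

def will_churn(months, threshold=0.5):
    # Convert the probability into a Yes/No answer.
    return churn_probability(months) >= threshold

for m in (1, 4, 7):
    print(m, round(churn_probability(m), 2), will_churn(m))
```

Keeping the probability around, rather than only the Yes/No answer, is what lets a team prioritize the highest-risk customers first.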

2. Classification Algorithms (Predicting “Which One”)

When the output isn’t a number but a category, you are in the realm of classification. This answers questions like “Is this email Spam or not?” or “Is this transaction Fraudulent or legitimate?”

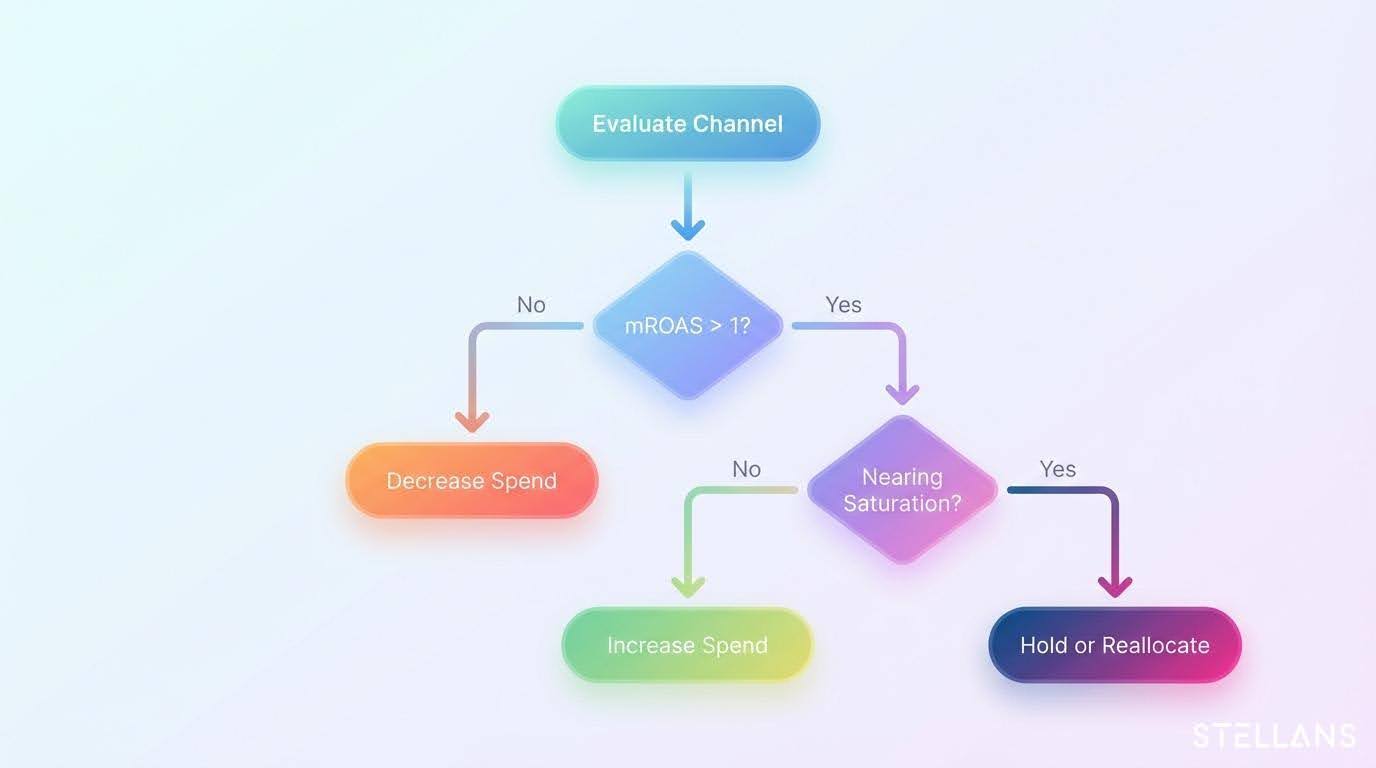

Decision Trees

A decision tree is exactly what it sounds like: a flowchart-like structure where the model asks a series of questions to reach a conclusion.

- How it works: The model splits data into branches based on rules. For example, in loan approval:

  - Question 1: Is income above $50,000? (If No -> Deny).

  - Question 2: (If Yes) Is the credit score above 700? (If Yes -> Approve).

- Best Business Use Case: Operational decisions where “why” matters as much as “what.” Examples include loan approvals, triage in healthcare, or customer segmentation rules.

- Pros: Extremely easy to visualize and explain to non-technical stakeholders. It is not a “black box.”

- Cons: Prone to “overfitting.” A single tree can memorize the training data too closely, making it poor at predicting future, unseen data.
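Written as code, the loan-approval tree above is just a pair of nested rules. This is a hand-written sketch, not a trained model: a real decision tree learns its thresholds from data. The text does not say what happens when income passes but the credit score does not, so the "Manual review" outcome below is an assumption.

```python
def approve_loan(income, credit_score):
    """Hand-coded version of the two-question loan tree."""
    # Question 1: Is income above $50,000? If not, deny.
    if income <= 50_000:
        return "Deny"
    # Question 2: Is the credit score above 700? If so, approve.
    if credit_score > 700:
        return "Approve"
    # Assumption: the source leaves this branch open.
    return "Manual review"

print(approve_loan(42_000, 780))
print(approve_loan(85_000, 720))
print(approve_loan(85_000, 640))
```

Because every prediction follows a readable path of rules, you can tell an applicant exactly which question led to the outcome.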

Random Forest

Random Forests reduce the errors of a single decision tree by building a “forest” of hundreds of trees, each trained on a random sample of the data and features, and then combining their results: a majority vote for categories, an average for numbers.

- How it works: It effectively crowdsources the decision. If 80 trees say “Fraud” and 20 say “Legit,” the model predicts “Fraud.”

- Best Business Use Case: Complex classification problems where accuracy is paramount, such as high-frequency trading decisions, complex fraud detection sequences, or predicting detailed customer preferences.

- Pros: Typically high accuracy; tolerant of noisy data and outliers. Whether missing values are handled automatically depends on the implementation.

- Cons: Harder to interpret. Nobody can read 500 trees at once, so the model behaves more like a black box, although feature-importance scores offer partial insight.
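The "crowdsourcing" step is simple to sketch. The snippet below covers only the final vote, assuming the individual trees have already made their predictions; `forest_predict` is a hypothetical helper, and training the trees themselves is left out.

```python
from collections import Counter

def forest_predict(tree_votes):
    """Return the majority label and the share of trees that voted for it."""
    label, count = Counter(tree_votes).most_common(1)[0]
    return label, count / len(tree_votes)

# Hypothetical votes from a 100-tree forest for one transaction.
votes = ["Fraud"] * 80 + ["Legit"] * 20

prediction, share = forest_predict(votes)
print(prediction, share)
```

The vote share is a useful by-product: an 80/20 split signals more confidence than a 51/49 split.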

Gradient Boosting Machines (GBM/XGBoost)

Similar to Random Forest, this is an ensemble technique. However, instead of building trees independently, it builds them sequentially. Each new tree tries to correct the errors left by the trees before it.

- Best Business Use Case: Winning Kaggle competitions and high-stakes corporate prediction tasks such as insurance pricing or highly specific recommendation engines.
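The "each tree corrects the previous errors" idea can be shown with the smallest possible trees: one-split "stumps." This is a teaching sketch under simplifying assumptions (one input, squared error, invented data, hypothetical function names); it is not how XGBoost or any other library is implemented internally.

```python
def fit_stump(xs, residuals):
    """Find the one-split tree that best fits the current residuals."""
    best = None
    for threshold in sorted(set(xs))[:-1]:
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        error = (sum((r - left_mean) ** 2 for r in left)
                 + sum((r - right_mean) ** 2 for r in right))
        if best is None or error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    return best[1:]

def predict_stump(stump, x):
    threshold, left_mean, right_mean = stump
    return left_mean if x <= threshold else right_mean

def fit_boosted(xs, ys, n_trees=50, learning_rate=0.3):
    """Start from the average, then add stumps that fit what is still wrong."""
    base = sum(ys) / len(ys)
    predictions = [base] * len(xs)
    stumps = []
    for _ in range(n_trees):
        residuals = [y - p for y, p in zip(ys, predictions)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        predictions = [p + learning_rate * predict_stump(stump, x)
                       for p, x in zip(predictions, xs)]
    return base, stumps, learning_rate

def predict_boosted(model, x):
    base, stumps, learning_rate = model
    return base + learning_rate * sum(predict_stump(s, x) for s in stumps)

# Hypothetical curved data that a single straight line would fit poorly.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [2, 3, 5, 9, 14, 20, 27, 35]

model = fit_boosted(xs, ys)
baseline = sum(ys) / len(ys)
mse_baseline = sum((y - baseline) ** 2 for y in ys) / len(ys)
mse_boosted = sum((y - predict_boosted(model, x)) ** 2
                  for x, y in zip(xs, ys)) / len(ys)
print(mse_baseline, mse_boosted)
```

The `learning_rate` deliberately shrinks each tree's contribution, so that many small corrections add up instead of one tree dominating.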

3. Clustering Algorithms (Finding Hidden Patterns)

Unlike the methods above, where we know the answer we are looking for, clustering algorithms are “unsupervised.” The model is given data without labels and asked to find structure on its own.

K-Means Clustering

- How it works: The algorithm groups data points into “K” clusters based on how similar they are to each other (distance).

- Best Business Use Case: Market Segmentation. You feed in customer purchase history and demographics, and the model auto-discovers groups—e.g., “Budget Conscious Students” vs. “High-Spending Boomers.”

- Pros: Great for discovery and strategy when you don’t know exactly what you are looking for.
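Here is a bare-bones sketch of the K-Means loop: assign each point to its nearest center, move each center to the average of its points, and repeat. The customer data is invented, and starting from the first K points is a simplification chosen to keep the example deterministic; real implementations use smarter starting points and usually scale the features first.

```python
def kmeans(points, k, iterations=20):
    """Group points into k clusters by repeatedly updating the centroids."""
    centroids = list(points[:k])  # Assumption: naive, deterministic start.
    clusters = [[] for _ in range(k)]
    for _ in range(iterations):
        # Step 1: assign every point to its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            distances = [sum((a - b) ** 2 for a, b in zip(p, c))
                         for c in centroids]
            clusters[distances.index(min(distances))].append(p)
        # Step 2: move each centroid to the mean of its assigned points.
        centroids = [
            tuple(sum(dim) / len(cluster) for dim in zip(*cluster))
            if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
    return centroids, clusters

# Hypothetical customers: (age, average monthly spend in dollars).
customers = [(20, 40), (62, 310), (22, 55), (58, 280), (19, 35), (65, 330)]

centroids, clusters = kmeans(customers, k=2)
for center, members in zip(centroids, clusters):
    print(center, members)
```

The algorithm only returns groups; naming them ("Budget Conscious Students," for instance) is still a human judgment made after inspecting each cluster.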

4. Time Series Analysis (Forecasting Over Time)

Time series techniques deal specifically with data that is indexed by time (daily, monthly, quarterly). Standard regression often fails here because it misses cyclical patterns, such as sales rising every December. Time series models account for trend and seasonality explicitly.

ARIMA (AutoRegressive Integrated Moving Average)

- How it works: It predicts the next value from lagged values (what happened yesterday) and from past forecast errors, after differencing the series to remove trend. Seasonal patterns need its seasonal extension (SARIMA).

- Best Business Use Case: Supply chain management, predicting daily server traffic, stock market analysis.
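To show what "using yesterday to predict tomorrow" looks like, here is a deliberately reduced sketch: it differences the series (the "I" in ARIMA) and fits a single lag term (the "AR" part), leaving out the moving-average part entirely. The traffic numbers and function names are hypothetical; a library such as statsmodels would normally do the estimation.

```python
def fit_ar1(series):
    """Least-squares fit of value[t] = c + phi * value[t-1]."""
    prev, curr = series[:-1], series[1:]
    n = len(prev)
    mean_p = sum(prev) / n
    mean_c = sum(curr) / n
    phi = (sum((p - mean_p) * (c - mean_c) for p, c in zip(prev, curr))
           / sum((p - mean_p) ** 2 for p in prev))
    c = mean_c - phi * mean_p
    return c, phi

def forecast_next(series):
    """One-step forecast: difference, fit one lag, then undo the differencing."""
    diffs = [b - a for a, b in zip(series, series[1:])]  # day-over-day change
    c, phi = fit_ar1(diffs)
    next_diff = c + phi * diffs[-1]
    return series[-1] + next_diff

# Hypothetical daily server traffic (thousands of requests).
traffic = [100, 102, 105, 109, 112, 114, 115, 117, 120, 124]

changes = [b - a for a, b in zip(traffic, traffic[1:])]
c, phi = fit_ar1(changes)
tomorrow = forecast_next(traffic)
print(c, phi, tomorrow)
```

A positive `phi` means a big rise yesterday tends to be followed by another rise today, which is the kind of momentum the lag term is meant to capture.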

Prophet (by Meta)

- How it works: It fits an additive model of trend, seasonality, and holiday effects, and is designed to handle real-world messiness such as missing data, trend shifts, and holidays with less manual tuning than ARIMA.

- Best Business Use Case: Retail inventory planning where holidays and weekends drive significant shifts in behavior.
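Prophet describes a series as the sum of a trend, seasonal effects, and holiday effects. The sketch below imitates only that additive idea with a straight-line trend plus a day-of-week effect; it is not Prophet's API or fitting procedure, and the sales data and function names are invented for illustration.

```python
def fit_additive(values, period=7):
    """Fit value = trend line + average day-of-week effect."""
    n = len(values)
    ts = list(range(n))
    mean_t = sum(ts) / n
    mean_y = sum(values) / n
    slope = (sum((t - mean_t) * (y - mean_y) for t, y in zip(ts, values))
             / sum((t - mean_t) ** 2 for t in ts))
    intercept = mean_y - slope * mean_t
    # What the trend line fails to explain, averaged per day of the week.
    residuals = [y - (intercept + slope * t) for t, y in zip(ts, values)]
    seasonal = []
    for day in range(period):
        bucket = residuals[day::period]
        seasonal.append(sum(bucket) / len(bucket))
    return intercept, slope, seasonal

def predict(model, t, period=7):
    intercept, slope, seasonal = model
    return intercept + slope * t + seasonal[t % period]

# Hypothetical three weeks of daily sales: steady growth plus a weekend bump
# on days 5 and 6 of each week.
sales = [100 + t + (30 if t % 7 in (5, 6) else 0) for t in range(21)]

model = fit_additive(sales)
friday = predict(model, 25)    # day 4 of the fourth week
saturday = predict(model, 26)  # day 5 of the fourth week (weekend)
print(friday, saturday)
```

Separating the components is what makes this style of model easy to reason about: planners can see how much of next Saturday's forecast comes from growth and how much from the weekend itself.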

5. Neural Networks (The Advanced Tier)

Neural networks are loosely inspired by the human brain’s interconnected neuron structure. This is the foundation of “Deep Learning.”

- How it works: Data passes through multiple layers of nodes, each weighting the input differently to identify incredibly complex, non-linear patterns.

- Best Business Use Case: Unstructured data. If you need to analyze images (quality control in manufacturing), audio (call center sentiment analysis), or vast amounts of unstructured text.

- Pros: Unmatched accuracy for specific complex tasks (images/voice).

- Cons: High computational cost; typically requires very large datasets; very hard to interpret (black box).
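To see how layers of weighted nodes capture a non-linear pattern, consider this tiny two-layer network in plain Python. The weights are hand-picked (an assumption for illustration) so the network outputs 1 when exactly one of its two inputs is 1, a pattern no single straight line can separate. Real networks learn their weights from data during training, which is omitted here.

```python
def relu(x):
    """A common activation: pass positive values through, zero out the rest."""
    return max(0.0, x)

def dense(inputs, weights, biases, activation):
    """One layer: each node takes a weighted sum of the inputs, then activates."""
    return [activation(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]

# Hand-picked (not learned) weights for a 2-input, 2-hidden-node, 1-output net.
HIDDEN_W = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_B = [0.0, -1.0]
OUTPUT_W = [[1.0, -2.0]]
OUTPUT_B = [0.0]

def forward(x1, x2):
    hidden = dense([x1, x2], HIDDEN_W, HIDDEN_B, relu)
    (output,) = dense(hidden, OUTPUT_W, OUTPUT_B, lambda v: v)
    return output

for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, forward(a, b))
```

Even in this toy case the individual weights say little about why the network behaves as it does, which hints at why large networks are so hard to interpret.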