Discovering at 5 PM on a Friday that your ad spend has exceeded budget by 40% is a nightmare no marketing team should face. Yet teams can spend 5 to 8 hours a week manually checking dashboards, and they often catch campaign issues only after significant ROI has already been lost.

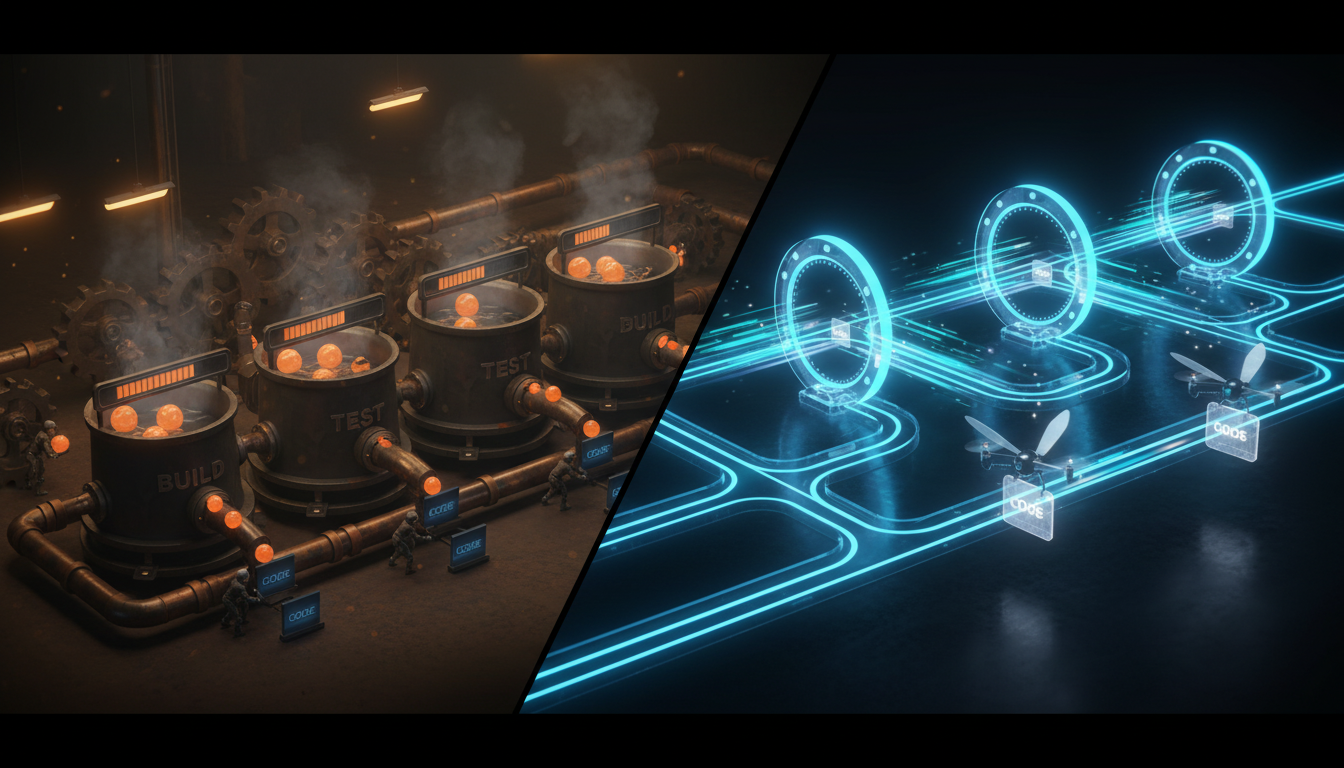

Data quality testing in dbt transforms this reactive firefighting into proactive protection. By implementing robust testing patterns for null values, accepted values, and relationships, you create a safety net that catches problems before they propagate to your reports and downstream decisions.
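As a rough preview of what these three patterns look like in practice, here is a minimal sketch of a dbt schema file using the built-in `not_null`, `accepted_values`, and `relationships` generic tests. The model names (`fct_ad_spend`, `dim_campaigns`), column names, and channel values are hypothetical placeholders rather than part of any real project. The `data_tests:` key assumes dbt 1.8 or later; earlier versions use `tests:` instead.

```yaml
# models/marketing/schema.yml (hypothetical path, model, and column names)
version: 2

models:
  - name: fct_ad_spend
    columns:
      - name: campaign_id
        data_tests:
          # Null check: every spend row must belong to a campaign
          - not_null
          # Relationship check: the campaign must exist in the dimension table
          - relationships:
              to: ref('dim_campaigns')
              field: campaign_id
      - name: channel
        data_tests:
          # Accepted values check: flag unexpected or misspelled channel names
          - accepted_values:
              values: ['google_ads', 'meta', 'linkedin']
      - name: spend_usd
        data_tests:
          - not_null
```

Running `dbt test --select fct_ad_spend` would execute these checks against the model and report any failing rows.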

This guide walks you through three core dbt testing patterns specifically designed for marketing analytics teams. We go beyond basic syntax to show you how to integrate these tests with real-time Slack alerts, so your team receives instant notifications when daily ad spend crosses a limit, conversion tracking breaks, or attribution data fails integrity checks.

By the end of this article, you will have production-ready code snippets for dbt schema configurations, anomaly detection models, and webhook integrations that deliver alerts to Slack in under 2 minutes.