Most teams can whip up a “cool” lead scoring model. Far fewer run a trusted lead scoring service that Sales and Marketing actually depend on. Formal, clear, measurable Service Level Agreements (SLAs) help close this gap: when SLAs spell out expectations, trust rises, fire drills drop, and analytics investments pay off.

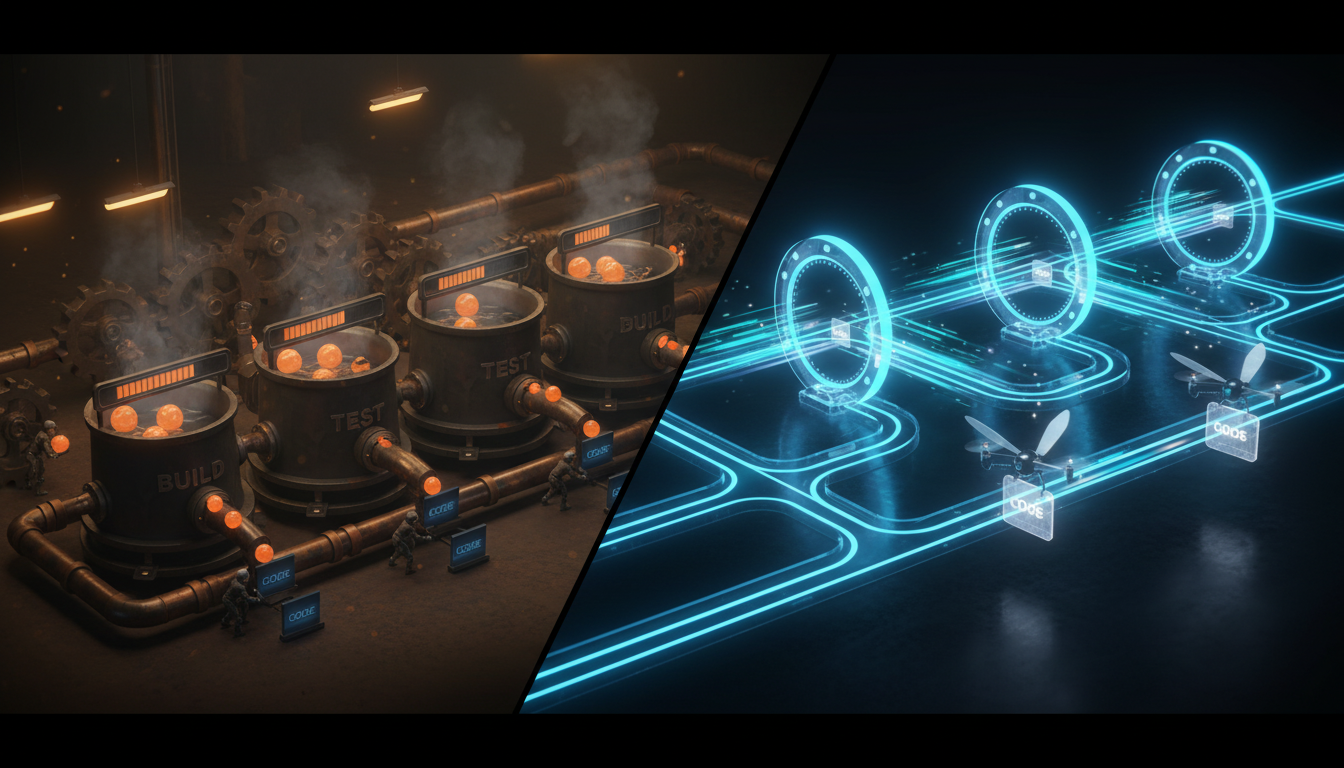

In this guide, we build a predictive lead scoring pipeline with SQL, dbt, and Python, and run it under a robust, stakeholder-focused SLA. Along the way you'll get a sample SLA template, a realistic approach to KPIs, and guidance on team communication. The goal is a data team that stakeholders see not as a ticket queue but as a high-reliability partner for growth.