Organizations often adopt streaming architectures eagerly. Building a working real-time pipeline, however, requires understanding that streaming is fundamentally different from batch processing. Streaming introduces new ways to manage state, time, and failure. System architects should weigh the complexity costs of streaming carefully before committing.

Time Semantics and Out-of-Order Data

In a batch system, time is relatively static: the data already sits in a storage layer when the job runs. In a streaming system, time is fluid, and architects must define the time semantics explicitly.

Each event is generated at a specific moment (event time), but it may reach the processing engine somewhat later (processing time). Network delays or intermittent mobile connectivity can cause data to arrive out of order.

To handle this, streaming engines use a concept called watermarks. A watermark is the engine's estimate of how far event time has progressed, which in effect tells the system how long to wait for delayed data. A short wait keeps latency low but risks excluding late events from their results. A long wait improves accuracy at the cost of higher latency. Balancing this tradeoff takes real distributed systems expertise.
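The tradeoff above can be made concrete with a minimal sketch. It assumes integer event timestamps, tumbling (non-overlapping) windows, and a watermark defined as the largest event time seen so far minus a fixed allowed delay. The class name `WatermarkedWindowCounter` and its parameters are hypothetical and do not correspond to any particular engine's API.

```python
from collections import defaultdict


class WatermarkedWindowCounter:
    """Counts events per tumbling window and finalizes a window
    once the watermark has passed the window's end.

    Illustrative only: real engines track watermarks per source/partition.
    """

    def __init__(self, window_size, max_out_of_orderness):
        self.window_size = window_size
        self.max_out_of_orderness = max_out_of_orderness  # how long to wait
        self.max_event_time = None
        self.open_windows = defaultdict(int)  # window start -> event count
        self.late_events = 0  # events that arrived after their window closed

    def watermark(self):
        """Event time up to which the stream is assumed to be complete."""
        if self.max_event_time is None:
            return float("-inf")
        return self.max_event_time - self.max_out_of_orderness

    def process(self, event_time):
        """Ingest one event; return the (window_start, count) pairs finalized by it."""
        window_start = (event_time // self.window_size) * self.window_size
        window_end = window_start + self.window_size

        if window_end <= self.watermark():
            # The window was already finalized, so this event is too late.
            self.late_events += 1
        else:
            self.open_windows[window_start] += 1

        if self.max_event_time is None or event_time > self.max_event_time:
            self.max_event_time = event_time

        current = self.watermark()
        ready = sorted(
            start for start in self.open_windows
            if start + self.window_size <= current
        )
        return [(start, self.open_windows.pop(start)) for start in ready]
```

With a window size of 10 and an allowed delay of 5, feeding event times 1, 4, 12, 3 still counts the out-of-order event 3 in the first window, because the watermark (12 - 5 = 7) has not yet passed the window's end at 10. Shrinking the allowed delay would finalize that window sooner and discard the same event as late.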

State Management at Scale

Batch tasks can usually operate statelessly: they read a file, transform the contents, and write a new file. Streaming applications, by contrast, are inherently stateful.

Consider a function that counts website visitors over a five-minute rolling window. The system must remember the current count across a continuous stream of events. It keeps this state in memory or on local disk. If a streaming node restarts, the system must recover this state without losing or double-counting events.
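A minimal sketch of the state such a function carries is shown here. It assumes timestamps in seconds that arrive in order, counts visits rather than unique visitors, and keeps everything in process memory. The name `RollingVisitorCounter` is hypothetical.

```python
from collections import deque


class RollingVisitorCounter:
    """Counts visits seen within the last `window_seconds` seconds.

    The deque of timestamps is the state that must survive between events.
    """

    def __init__(self, window_seconds=300):
        self.window_seconds = window_seconds
        self.timestamps = deque()  # visit times still inside the window

    def record_visit(self, timestamp):
        """Store one visit and return the rolling count as of that moment."""
        self.timestamps.append(timestamp)
        return self.count(timestamp)

    def count(self, now):
        """Evict visits older than the window, then return what remains."""
        cutoff = now - self.window_seconds
        while self.timestamps and self.timestamps[0] <= cutoff:
            self.timestamps.popleft()
        return len(self.timestamps)
```

Because the deque lives only in memory, a restart of this process would reset the count to zero, which is exactly the gap that state recovery has to close.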

State recovery relies on checkpointing mechanisms. The infrastructure periodically backs up the state to distributed storage. This adds I/O overhead. It also complicates the deployment of new application versions, because a new version must be able to restore state written by the previous one.
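The following sketch illustrates the idea of periodic checkpointing under simplifying assumptions: a local file stands in for distributed storage, the input is a replayable list, and the state is a single counter plus the offset of the next event to process. `CheckpointedCounter` and its parameters are hypothetical names, not a real engine's API.

```python
import json
import os
import tempfile


class CheckpointedCounter:
    """Counts events and periodically snapshots (count, offset) to a file."""

    def __init__(self, checkpoint_path, checkpoint_every=100):
        self.checkpoint_path = checkpoint_path
        self.checkpoint_every = checkpoint_every
        self.count = 0
        self.offset = 0  # index of the next event to process
        self._restore()

    def _restore(self):
        """Load the last snapshot, if one exists (for example after a restart)."""
        if os.path.exists(self.checkpoint_path):
            with open(self.checkpoint_path) as f:
                snapshot = json.load(f)
            self.count = snapshot["count"]
            self.offset = snapshot["offset"]

    def _checkpoint(self):
        """Write the snapshot to a temp file, then atomically swap it in."""
        directory = os.path.dirname(self.checkpoint_path) or "."
        fd, tmp_path = tempfile.mkstemp(dir=directory)
        with os.fdopen(fd, "w") as f:
            json.dump({"count": self.count, "offset": self.offset}, f)
        os.replace(tmp_path, self.checkpoint_path)

    def process(self, events):
        """Process a replayable event list, resuming from the stored offset."""
        for _event in events[self.offset:]:
            self.count += 1
            self.offset += 1
            if self.offset % self.checkpoint_every == 0:
                self._checkpoint()
```

Storing the input offset together with the count is the key design choice in this sketch: after a restart, events processed since the last snapshot are replayed from that offset, so they are neither lost nor counted twice. Each snapshot is also the extra I/O the paragraph above describes.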

Infrastructure and Upskilling Overhead

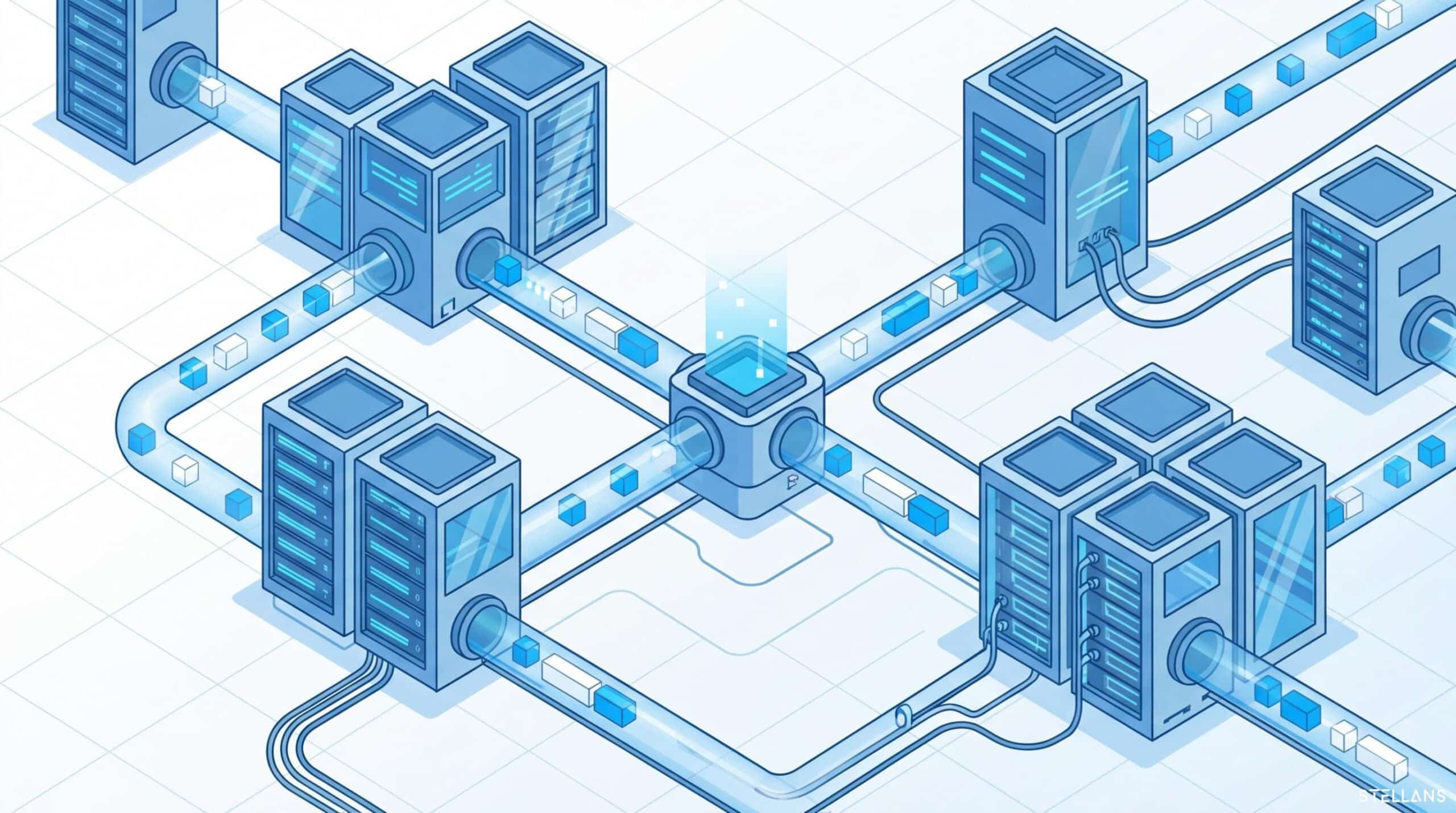

Streaming architecture depends on specialized infrastructure. Instead of scheduling simple cron jobs, you deploy distributed message brokers. You also maintain continuous processing engines like Apache Flink.

These clusters require dedicated operational oversight. They run constantly, so they consume infrastructure resources around the clock. Monitoring streaming systems also differs from monitoring batch jobs: teams must track consumer lag, backpressure, and JVM garbage collection behavior.
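Of these metrics, consumer lag is the simplest to illustrate: for each partition, it is the gap between the newest offset on the broker and the last offset the consumer has committed. The sketch below assumes both sets of offsets have already been fetched into plain dictionaries. The function names and the alert threshold are hypothetical, and no real broker client API is used.

```python
def consumer_lag(end_offsets, committed_offsets):
    """Return per-partition lag: newest broker offset minus committed offset.

    Assumption: a partition with no committed offset is treated as
    having committed offset 0.
    """
    return {
        partition: max(end - committed_offsets.get(partition, 0), 0)
        for partition, end in end_offsets.items()
    }


def partitions_over_threshold(lag_by_partition, threshold):
    """Return the partitions whose lag exceeds the alerting threshold."""
    return sorted(p for p, lag in lag_by_partition.items() if lag > threshold)
```

A lag that keeps growing over time suggests the consumer cannot keep up with the producer, which is often the first visible symptom of backpressure further down the pipeline.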

Our Consulting team helps organizations navigate these exact challenges. We guide digital transformation, making sure technology choices align with business goals and long-term strategy. We assess your team’s readiness for streaming architecture. We then provide a clear roadmap to close the capability gaps.